The readiness gap is a security architecture problem

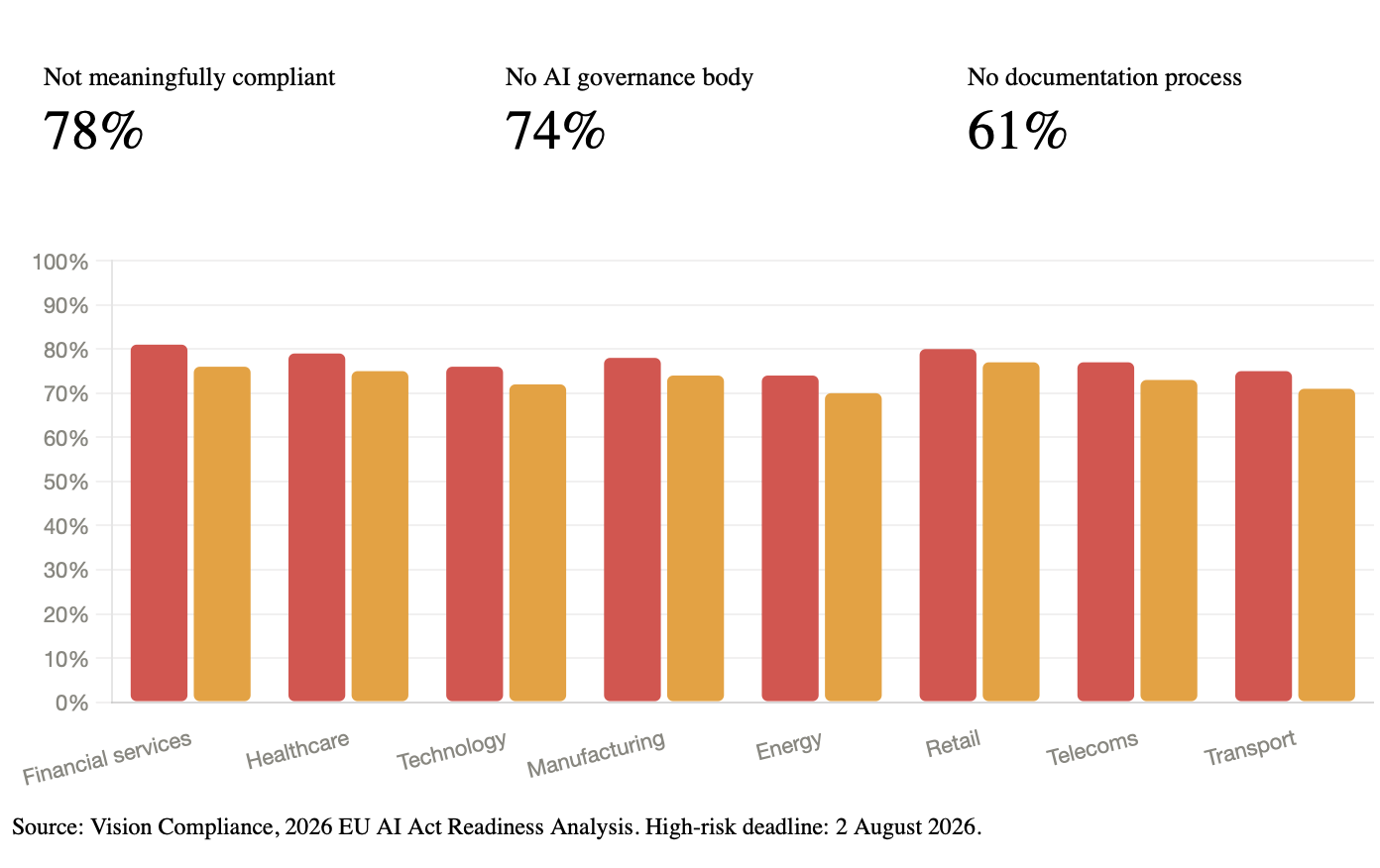

78% of organizations have not taken meaningful steps toward EU AI Act compliance, and the high-risk rules start applying on 2 August 2026. For teams deploying AI agents, this is not mainly a legal problem. It is a security architecture problem.

That would already be serious. It is more serious because the AI Act’s high-risk obligations start applying on August 2, 2026. The fine ceiling is EUR 35 million or 7% of global annual turnover.

The supporting numbers matter just as much:

- 74% of organizations have no dedicated AI compliance governance body.

- 61% have no process for producing the technical documentation the Act requires.

Source: Vision Compliance, 2026 EU AI Act Readiness Analysis. High-risk enforcement begins on 2 August 2026.

For agentic systems, this is not only a legal backlog. It is a security design failure. The controls the Act expects are the same controls security teams should already want: clear system boundaries, tool-level authorization, reliable audit trails, and a real human override path.

If you cannot explain what an agent can access, why it was allowed to act, and how to stop it safely, you do not just have a compliance problem. You have a security problem.

What the August 2026 deadline actually requires

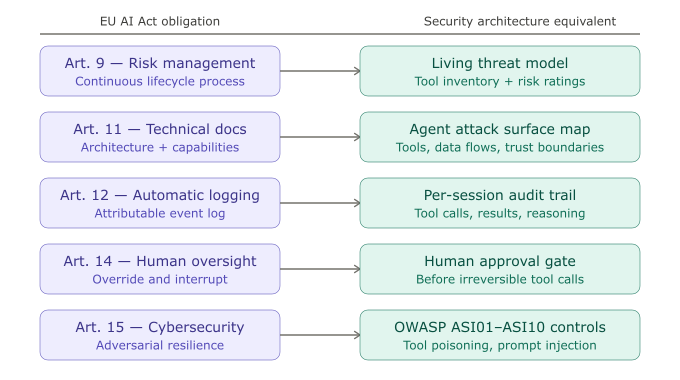

The AI Act is risk-based. For high-risk systems under Annex III, five articles matter most to agent deployments.

Article 9: Risk management. Risk management must be continuous. For an agent, that means the threat model changes every time you add a new tool, data source, workflow, or sub-agent.

Article 11: Technical documentation. Documentation must describe the system well enough for a regulator to assess it without relying on your engineering team to fill in the gaps.

Article 12: Automatic logging. Logs must support monitoring and incident investigation. They must make specific actions attributable to a specific system state.

Article 14: Human oversight. People must be able to interrupt, override, or shut down the system in practice, not just in policy.

Article 15: Cybersecurity. High-risk systems must be resilient to adversarial attacks, data poisoning, model manipulation, and abuse of outputs.

The five key articles map directly to engineering controls that secure agentic AI systems whether regulation exists or not.

The most revealing statistic in the Vision Compliance analysis may be the 61% with no documentation process. For an AI agent, Article 11 documentation is not paperwork. It is the system map: what the agent can do, what it can reach, what data it touches, and what conditions constrain its actions. If you cannot produce that document, you do not understand your own deployment.

Why AI agents make the gap worse

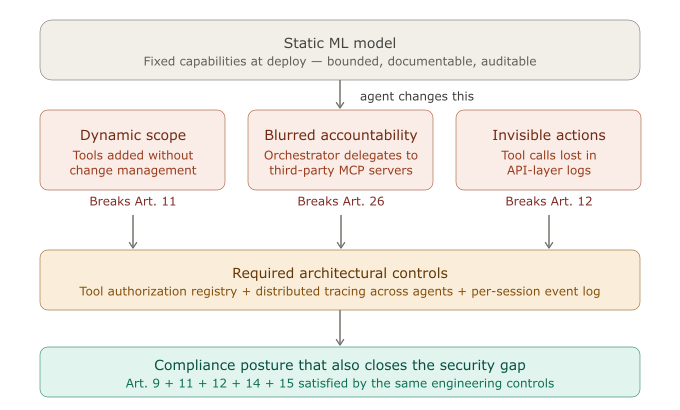

A traditional model is usually easier to classify, document, and audit. Agents are harder because the system boundary moves at runtime.

Each structural property of agentic AI creates a predictable compliance failure. The architectural fix is usually the same control security teams should already be building.

Tools change the system’s real capability set. An agent’s power is defined less by the model and more by the tools it can invoke. If tool access is broad, loosely governed, and changed without formal review, Article 9 and Article 11 become difficult to satisfy.

Multi-agent workflows blur accountability. Once an orchestrator delegates to worker agents or third-party MCP-connected services, it becomes harder to say who is responsible for which decision and which action. That creates both governance risk and incident-response confusion.

Default logs are not enough. LLM API logs may capture prompts and completions, but they usually do not capture tool calls, tool outputs, or the decision chain that links an action to a specific agent session. That is a direct Article 12 problem.

Human oversight has to be engineered. A policy that says “a human reviews critical actions” is not enough if the architecture cannot pause, interrupt, or roll back an agent that is already executing a multi-step workflow.

For teams already deploying agents, the readiness gap is usually wider because these systems combine more moving parts, less stable boundaries, and weaker default observability than conventional ML deployments.

Four questions every team should answer now

If you operate AI agents in an Annex III domain, your team should be able to answer these questions immediately.

- Can you enumerate every tool the agent can invoke, and the conditions under which each tool is authorized?

If the answer is “the agent can access everything in the MCP config,” you have both a security gap and a compliance gap.

- Does every state-changing action create a log entry tied to a specific agent session?

Writing to a database, sending a message, approving a transaction, or triggering a downstream process should all be attributable. Standard API logs are not enough.

- Can a human interrupt or override the agent before it finishes its task chain?

If stopping the agent means killing the process and hoping no irreversible action has already happened, Article 14 is not satisfied.

- Can you hand someone a clear document that explains the agent’s architecture, capabilities, data access, and decision path?

If your only documentation is the system prompt, repo, and tribal knowledge in Slack, you are not ready for Article 11.

The deadline is four months away. The preparation work is measured in months, not weeks. For organizations that have not started, the math is already difficult.

Bottom line

The organizations that close this gap fastest will not treat the AI Act as a paperwork exercise.

They will treat:

- Article 9 as a living threat model.

- Article 11 as a security architecture review.

- Article 12 as audit-grade telemetry.

- Article 14 as an engineered human control point.

- Article 15 as a core resilience requirement.

That is the useful mental model for security leaders: compliance and security are not separate tracks for agentic AI. In practice, they are the same work.

The legal deadline is August 2, 2026. The operational deadline is earlier: before your agent makes its first consequential mistake without a log trail, an override path, or a documented risk assessment.

Go deeper

If this article matches what you are seeing inside real deployments, the next step is not more theory. It is implementing the controls that close the gap: tool authorization, MCP trust boundaries, agent audit trails, and human approval gates.

Those are the focus of the live cohort course I am running this spring.

The free workshop on April 29 covers the attack surface side: how agents fail when these controls are missing.

Register at newsletter.aminrj.com — workshop link goes to subscribers first.

References:

Amine Raji is an AI security practitioner with 15+ years in banking, defense, and automotive security. He writes about agentic AI attack surfaces, MCP security, and the OWASP Agentic Top 10 at aminrj.com.